Synthesis of integral imaging based 3D-images and 3D-video

We have created a ray-tracing based simulation tool to generate 3D-images and 3D-video sequences, with a focus on Integral Imaging (II) based 3D. The tool is built around a generic 3D-camera description model and provides optically accurate rendering using the ray-tracing package MegaPOV. Because it uses MegaPOV, the tool fully incorporates the scene description language of POV-Ray, which allows for exact definitions of arbitrarily complex 3D-scenes. Compared to experimental research, the tool greatly facilitates the evaluation of different static and dynamic II-techniques.

3D-camera description model

In 1991, Adelson and Bergen defined a complete representation of the visible world using the plenoptic function

$$F = f(L_x, L_y, L_z, \theta, \phi, \lambda, t), \qquad (1)$$

where $F$ corresponds to the intensity of all light rays passing through space at location $L = [L_x, L_y, L_z]^T$ with direction $(\theta, \phi)$ at time $t$ and with wavelength $\lambda$. By transforming the light ray directions from the spherical representation $(\theta, \phi)$ into the Cartesian $D = [D_x, D_y, D_z]^T$, integrating over all visible wavelengths $\lambda$ using an RGB color model, and using vector notation for location and direction, eq. (1) is condensed into

$$F = f(L, D, t), \qquad (2)$$

where $F$ now corresponds to the RGB-triplet $[r, g, b]^T$.
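The change from the spherical direction (θ, φ) to a Cartesian direction vector can be sketched as follows. The text does not state an angle convention, so this example assumes that θ is the polar angle measured from the +z axis and φ is the azimuth measured from the +x axis; the function name and the component names Dx, Dy, Dz are illustrative only.

```python
import math


def spherical_to_cartesian(theta, phi):
    """Convert a direction (theta, phi) in radians to a unit vector (Dx, Dy, Dz).

    Assumed convention (not taken from the source): theta is the polar
    angle from the +z axis, phi is the azimuth from the +x axis.
    """
    sin_theta = math.sin(theta)
    return (
        sin_theta * math.cos(phi),
        sin_theta * math.sin(phi),
        math.cos(theta),
    )


# A ray travelling straight along the +z axis has theta = 0.
d_forward = spherical_to_cartesian(0.0, 0.0)

# An arbitrary direction; the result is unit length by construction.
d_oblique = spherical_to_cartesian(math.radians(30.0), math.radians(45.0))
```

Under a different angle convention only the three component expressions change; the resulting vector is still unit length.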

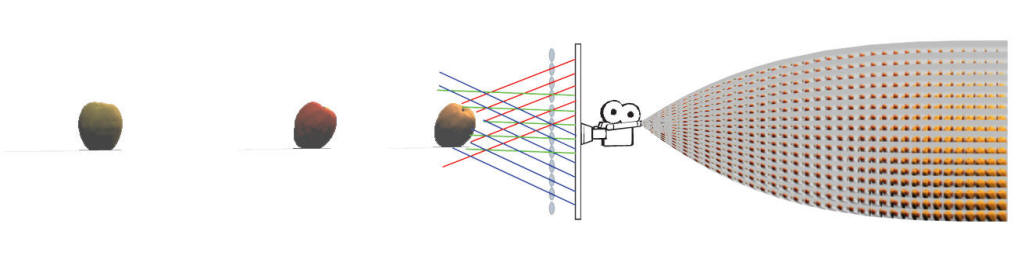

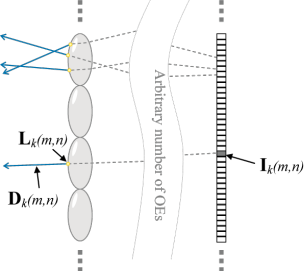

In the synthesis we use a model based on tracing captured light rays back through the optical elements (OE) of the 3D-camera. For each back-traced light ray, a location point L and a direction vector D are calculated, as shown in Figure 1.

Figure 1. Derivation of location points and direction vectors

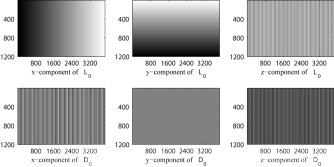

The resulting sets of L and D are arranged into pixel maps that a ray-tracer uses to define the start and direction of all primary view-rays that are shot into a 3D-scene. That is, the ray-tracer evaluates the plenoptic function given by eq. (2) for every pixel in the resulting image I. Figure 2 shows the D- and L-maps for a 3840x2400 pixel lenticular 3D-camera.

Figure 2. D- and L-map for a lenticular 3D-camera

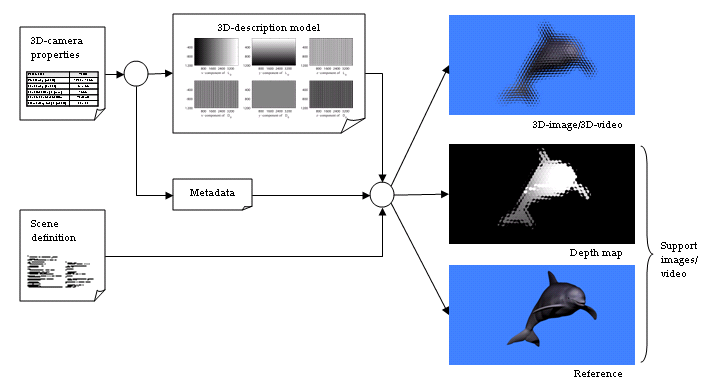
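As a rough illustration of what a pair of L- and D-maps contains, the sketch below builds them for the simplest possible case, an ideal pinhole camera, rather than for a lenticular camera. It is not the tool's implementation: the function name, the field-of-view parameter and the camera placement (at the origin, looking along +z) are all assumptions made for the example.

```python
import math


def pinhole_maps(width, height, fov_deg=60.0):
    """Return (l_map, d_map) as nested lists indexed [row][col].

    Hypothetical ideal pinhole camera at the origin looking along +z:
    every primary ray starts at the same location L, and only the
    direction D varies from pixel to pixel.
    """
    half_w = math.tan(math.radians(fov_deg) / 2.0)
    half_h = half_w * height / width
    l_map, d_map = [], []
    for row in range(height):
        # Pixel centres mapped to [-1, 1], with +y pointing up.
        v = 1.0 - 2.0 * (row + 0.5) / height
        l_row, d_row = [], []
        for col in range(width):
            u = 2.0 * (col + 0.5) / width - 1.0
            dx, dy, dz = u * half_w, v * half_h, 1.0
            norm = math.sqrt(dx * dx + dy * dy + dz * dz)
            l_row.append((0.0, 0.0, 0.0))
            d_row.append((dx / norm, dy / norm, dz / norm))
        l_map.append(l_row)
        d_map.append(d_row)
    return l_map, d_map


# Tiny 4x3 maps, just to inspect the structure.
l_map, d_map = pinhole_maps(4, 3)
```

For a lens-array camera the L-map would no longer be constant, since each back-traced ray leaves the optics at its own location; the map layout itself stays the same.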

3D-scene definition and information flow

By using our 3D-camera description model (described by the D- and L-map and auxiliary metadata), the same 3D-scene definition can be used to synthesize II-based 3D-images that adhere to different II-techniques. This separation between 3D-camera and 3D-scene greatly facilitates many forms of comparative research, e.g. development of coding schemes or evaluation of depth extraction algorithms. Figure 3 shows the information flow of the synthesis process, where the camera and scene properties are separately defined and thus easily interchangeable.

Figure 3. Information flow from scene and camera definitions to rendered 3D-images, 3D-video and support maps.
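The separation of camera and scene can be illustrated with a toy example in which one scene function is evaluated with two different sets of (L, D) pairs. This is only a sketch of the idea, not the MegaPOV-based pipeline: the scene (a single red sphere), the two camera functions and all parameter values are hypothetical.

```python
import math


def scene(l, d, t=0.0):
    """Toy plenoptic function f(L, D, t): a red unit sphere centred at
    (0, 0, 5) on a black background. d is assumed to be unit length."""
    ox, oy, oz = l[0], l[1], l[2] - 5.0
    b = ox * d[0] + oy * d[1] + oz * d[2]
    c = ox * ox + oy * oy + oz * oz - 1.0
    disc = b * b - c
    # The ray hits if the quadratic has a root in front of the ray start.
    hit = disc >= 0.0 and (-b + math.sqrt(disc)) > 0.0
    return (1.0, 0.0, 0.0) if hit else (0.0, 0.0, 0.0)


def pinhole_camera(n, half_width=1.0):
    """n x n rays sharing one location, with per-pixel directions."""
    rays = []
    for row in range(n):
        for col in range(n):
            u = (2.0 * (col + 0.5) / n - 1.0) * half_width
            v = (1.0 - 2.0 * (row + 0.5) / n) * half_width
            norm = math.sqrt(u * u + v * v + 1.0)
            rays.append(((0.0, 0.0, 0.0), (u / norm, v / norm, 1.0 / norm)))
    return rays


def parallel_camera(n, half_width=2.0):
    """n x n rays sharing one direction, with per-pixel locations."""
    rays = []
    for row in range(n):
        for col in range(n):
            u = (2.0 * (col + 0.5) / n - 1.0) * half_width
            v = (1.0 - 2.0 * (row + 0.5) / n) * half_width
            rays.append(((u, v, 0.0), (0.0, 0.0, 1.0)))
    return rays


def render(scene_fn, rays, t=0.0):
    """Evaluate the scene once per (L, D) pair; returns a flat pixel list."""
    return [scene_fn(l, d, t) for l, d in rays]


# Same scene, two interchangeable camera descriptions.
image_pinhole = render(scene, pinhole_camera(5))
image_parallel = render(scene, parallel_camera(5))
```

The scene function never needs to know which camera produced the rays, which is the property that makes the camera and scene definitions interchangeable.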

Results ‒ 3D-camera description models

Below is a set of generated camera models (downscaled for presentation purposes) that have been used to synthesize II-based 3D-images and 3D-video.

D-map L-map

Rectangular lens array (64x64 lenses, 4096x4096 pixels in total)

2D pinhole perspective camera (1 lens, 8192x4608 pixels in total)

Hexagonal lens array (54x63 lenses, 1920x1035 pixels in total)

Results ‒ 3D-scenes

A small assortment of the 3D-images and 3D-videos that have been synthesized with our tool is presented below. The images are downscaled for web presentation, but full-scale images are available on request.

3D-scene definition

"Objects"

Images

"Cuboid" (128x64 rectangular lenses)

"Dolphin" (Hexagonal lenses)

Video

"HairdoOrtho" (2D parallel projection)

"HairdoLenticular" (Lenticular lenses)

"HairdoHexagonal" (Hexagonal lenses)

Publications

"A ray-tracing based interactive simulation environment for generating integral imaging video sequences"

Roger Olsson and Youzhi Xu,

Proceedings of Optics East - ITCOM - Three-dimensional TV, Video and Display IV, SPIE, October 2005

"A Ray-Tracing Based Simulation Environment for Generating Integral Imaging Source Material"

Roger Olsson and Youzhi Xu,

Proceedings of RVK, June 2005